How Confluent's Schema ID Shift to Kafka Headers Enhances Data Governance

Confluent moves schema IDs from Kafka payloads to headers, simplifying governance, improving cross-format interoperability, and reducing data-metadata coupling in event-driven architectures.

Confluent has introduced a significant change in Apache Kafka by moving schema IDs from message payloads to record headers. This update aims to streamline schema governance and evolution, integrating seamlessly with Schema Registry to improve compatibility across serialization formats and reduce the tight coupling between data and metadata in event-driven architectures. Below, we explore the key questions about this innovation.

What Exactly Did Confluent Change in Kafka’s Schema Handling?

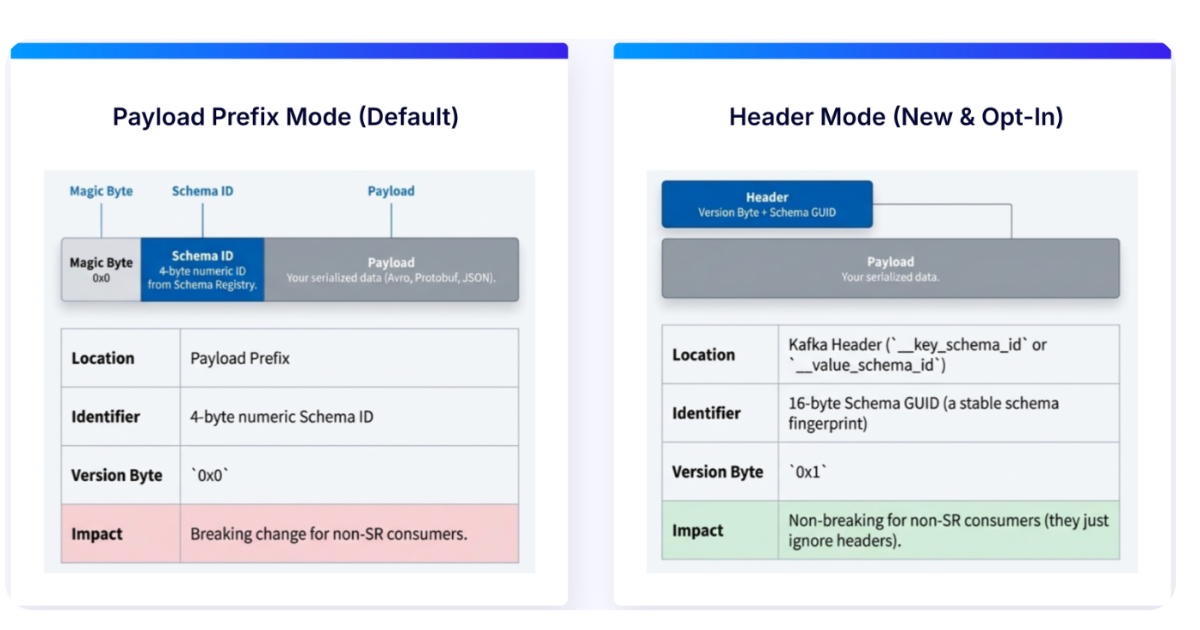

Confluent moved the schema ID—a numeric identifier that points to a specific schema version in Schema Registry—from inside the message payload to the record header. Previously, the schema ID was embedded within the serialized data (e.g., as the first few bytes in Avro format). Now, it sits in a separate header attached to each Kafka record. This shift decouples metadata from the actual data, making it easier to manage schema evolution and governance without altering the payload structure.

Why Is Moving Schema IDs to Headers a Game Changer for Schema Governance?

By placing schema IDs in headers, Confluent simplifies schema governance because metadata (the ID) is no longer intertwined with the message payload. This means that schema versioning, validation, and compliance checks can be performed on the header independently of the payload content. For instance, tools like Confluent Schema Registry can quickly inspect the header without deserializing the payload, reducing processing overhead. Moreover, it allows for more flexible schema evolution policies—such as backward compatibility or transitive compatibility—because the ID is easily accessible without touching the data itself. This decoupling also enables better auditing and lineage tracking, as headers can be analyzed separately for governance purposes.

How Does This Move Improve Compatibility Across Serialization Formats?

Different serialization formats (Avro, Protobuf, JSON Schema) have their own ways of embedding schema IDs. By moving IDs to a standardized location in the record header, Confluent creates a uniform approach that works across all formats. Previously, each format required custom handling to extract the schema ID from the payload. Now, any consumer can read the header regardless of the serialization format, making cross-format interoperability smoother. For example, a Protobuf consumer can read a header from an Avro-produced record without parsing the Avro payload. This reduces integration friction and supports polyglot environments where different services use different serializers.

What Are the Benefits for Event-Driven Architectures?

Event-driven architectures rely on loose coupling between producers and consumers. Moving schema IDs to headers reduces coupling by separating the metadata (schema ID) from the data (payload). This means consumers can evolve their schema handling logic without affecting the payload structure. For instance, if a schema ID retrieval method changes, it only requires updates on the consumer’s header-reading code, not the entire deserialization pipeline. Additionally, headers can carry other metadata (like timestamp or trace ID) alongside the schema ID, enabling richer event processing. This change also improves performance because schema resolution can happen at the header level without needing to deserialize the full message, which is especially beneficial for high-throughput systems.

/presentations/game-vr-flat-screens/en/smallimage/thumbnail-1775637585504.jpg)

Does This Update Affect Backward Compatibility with Existing Kafka Streams or Connectors?

Confluent has designed this change to be backward compatible. Existing producers that embed schema IDs in the payload will still work, but new producers can choose to use the header approach. For consumers, the Schema Registry client can be configured to look for the ID in either the payload or header, ensuring a smooth transition. Kafka Streams and connectors that already use Confluent’s serializers will automatically support the new header-based ID once upgraded to compatible versions. This mitigates the risk of breaking existing pipelines while allowing adoption over time. Organizations can migrate gradually by updating producers first, then consumers, without requiring a global flag day.

How Does the Schema Registry Integration Work with Header-Based IDs?

When a producer writes a message with the schema ID in the header, the Schema Registry client (e.g., KafkaAvroSerializer) automatically places the ID in a predefined header key (e.g., confluent.schema.id). The consumer’s deserializer reads this header and retrieves the corresponding schema from the local cache or Registry. This eliminates the need to parse the payload to find the ID, reducing latency. Additionally, the Registry can enforce compatibility checks directly on the header, ensuring that schema evolution rules are met before the data is serialized. This tight integration simplifies deployment and management, as the Registry remains the single source of truth for schema versioning and compatibility.

What Does This Mean for Data and Metadata Coupling in Kafka?

Historically, schema IDs were part of the payload, creating a tight coupling between data and its metadata. This made it difficult to change schema handling without touching the data format. By moving the ID to headers, Confluent reduces this coupling significantly. Headers are distinct from the payload and can be processed, filtered, or inspected without deserializing the message. This separation aligns with the principle of separating concerns in event-driven systems: the payload contains pure business data, while headers carry operational metadata. This not only simplifies schema governance but also enables more modular and maintainable architectures where different components (e.g., routing, monitoring, security) can operate on metadata independently.